What Makes People Use One LLM Over Another

The most popular LLMs for consumers all work the same way. A chatbot. A prompt. A response. They can help you brainstorm, perfect code and generate images that are sometimes indistinguishable from real photos. If the big three (Gemini, Claude and ChatGPT) can do all of these things, why are people drawn to one over the others?

It's not the benchmarks. Nobody reads those. It's something older and less rational. It's the same psychology that makes someone loyal to Blank Street Coffee or Tesla. We are not objective consumers of AI. We are human ones. And humans are messy.

ChatGPT got there first. That's most of the story.

ChatGPT didn't just launch in 2022; it launched a category. It gave millions of people their first experience of what AI assistance could feel like, and that first experience became the blueprint for everything to follow.

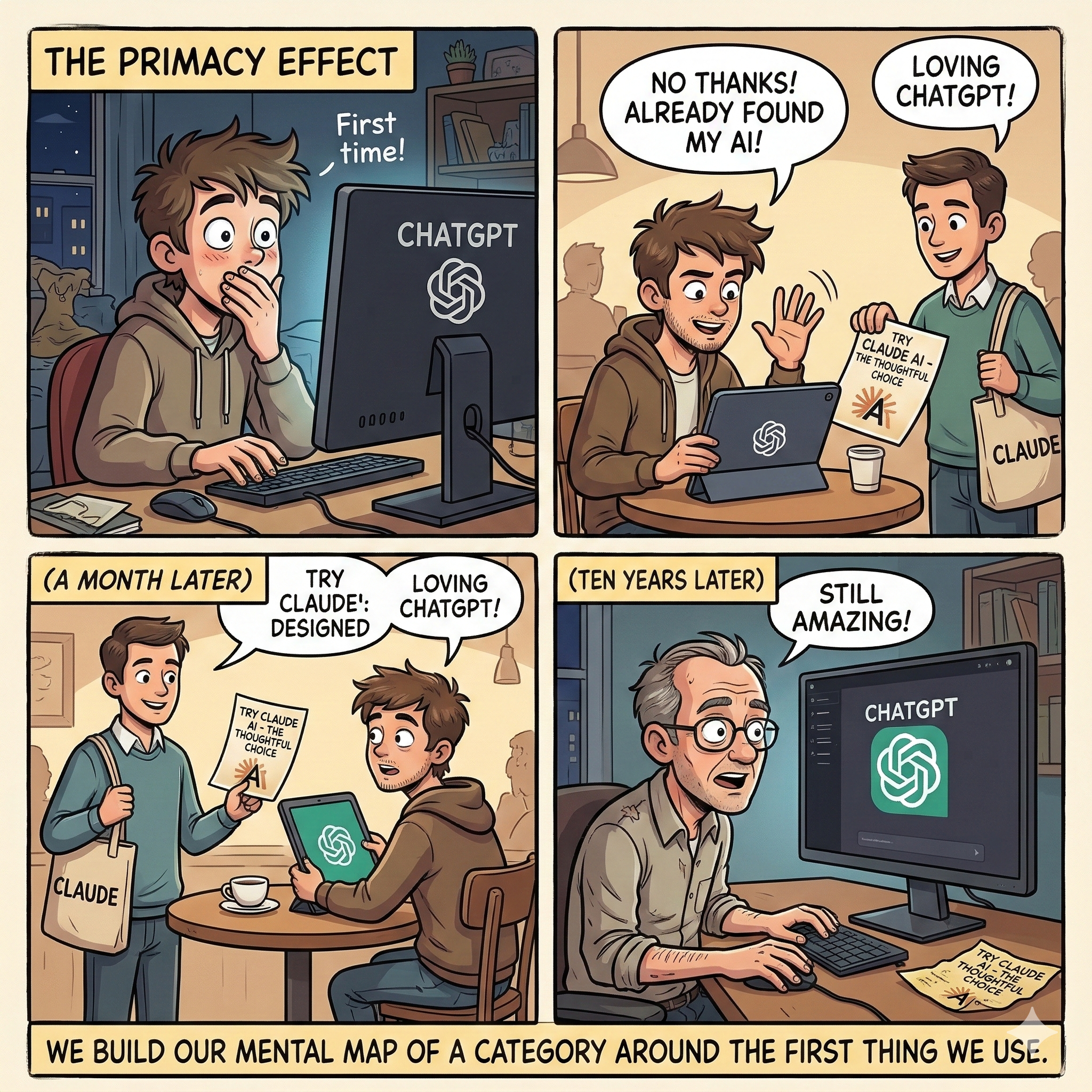

Psychologists call this the primacy effect. The first thing you encounter in a new category shapes your mental model of how that category works. If ChatGPT was your first LLM, you built your understanding of what AI is around its interface, its tone, its patterns. Switching isn't just inconvenient; it creates cognitive friction. You have to rebuild the mental map.

ChatGPT now handles 2.5 to 3 billion prompts daily, averaging 16 minutes of use per person per day (SQ Magazine). Those numbers aren't purely about quality. They're about habit. And habit is the most underrated moat in any market.

Anthropic was built out of fear. That matters.

Anthropic wasn't founded to win the AI race. It was started by several people who had been closest to the frontier, including CEO Dario Amodei, who left OpenAI in 2021. They had become genuinely alarmed by how fast things were moving relative to how well anyone understood what was being built. They decided to build a company founded on the uncomfortable premise that the most capable AI systems might also be the most dangerous ones.

That origin story has been baked into Claude. Anthropic's approach is to train models against a set of principles rather than purely optimising for user approval, and the result is something you can feel in the product even if you can't articulate it. The usage stats show that Claude is used heavily for work-related productivity: 36% of Claude usage relates to computer programming, compared to just 4.2% for ChatGPT (Fortune). That's not a coincidence. Anthropic's public philosophy (careful, rigorous, ethics-first) attracts a specific kind of person, and the product reflects who built it.

Beyond the philosophy, there's something else going on. For me, OpenAI has started to feel a little too corporate. The kind of corporate that comes with enterprise sales decks, Microsoft integrations baked into every Office product, and a general sense that the product exists to serve a business model as much as a user. That creep is subtle. You don't always notice it happening until you spend time somewhere that doesn't feel that way.

Anthropic made that contrast explicit at this year's Super Bowl. While OpenAI was announcing a $200 million Pentagon contract, Anthropic ran ads that quietly pointed out something their competitor had recently confirmed: ChatGPT would be adding advertisements to its interface. Claude wouldn't. It was a small detail delivered with a light touch, but it landed, because there's something genuinely unsettling about an AI that's simultaneously offering you advice and being paid to shape it. The moment ads enter the chat, the relationship changes. You stop being the user and start being the product. Anthropic bet that people would notice that distinction. And enough of them did. Free active users on Claude have increased by over 60% since the start of 2026, and daily sign-ups have quadrupled (Fortune).

Trust is hard to manufacture and easy to lose. Anthropic has spent years building a reputation for being the careful one, the company that turned down a Pentagon contract rather than cross its own ethical lines, that doesn't plaster ads into the tool you're thinking inside. Whether that reputation is fully deserved is a separate question. But perception is the game. And right now, a meaningful number of people feel safer inside Claude than they do inside ChatGPT.

When you choose Claude, you're not just picking a tool. You're signalling something about the kind of AI development you want to support. Whether consciously or not.

Why I moved to Claude

My reasons were not philosophical. They were almost embarrassingly surface-level. I was moved by the algorithm.

I'd been using ChatGPT for years. It knew me. My writing style, my work, the context I'd built up over hundreds of conversations. Switching felt unnecessary. I'd tried Claude a few times and hadn't really seen the difference. They seemed to do the same things, more or less, and ChatGPT had the advantage of already knowing who I was.

But my social media feeds had other ideas. Post after post, designers, developers, content creators, all making the same case for Claude. Not in a sponsored way but in the way people talk about something when they've genuinely changed how they work. I got curious enough to commit to a week.

I haven't gone back yet.

The first thing I noticed was the interface. Claude doesn't feel generic in the way ChatGPT does. There's evidence of considered design in the colour palette, the typography, the way interactions are paced. I use dark mode almost exclusively, and Claude's palette isn't harsh on the eyes the way OpenAI's tends to be. That sounds like a minor thing, but spend a few hours a day inside a tool and the environment starts to matter more than you'd expect.

What kept me there was harder to name. When I ask something ambiguous, it offers to clarify, sometimes with structured options, sometimes with a question that reframes what I was actually trying to ask. It doesn't barrel forward with an assumption. It's a small behaviour, but it changes the quality of what you get back in ways that add up quickly.

The outputs are different too. Claude builds things (structured documents, formatted HTML, proper artefacts) where other tools tend to summarise and leave you to do the rest. And somewhere underneath all of that, harder still to articulate, it feels more considered. More like talking to someone who weighed what they were about to say before saying it.

I considered transferring my history from ChatGPT to Claude, and suddenly, knowing me didn't seem like the advantage I'd thought it was.

I still use ChatGPT from time to time, and Gemini almost exclusively for research, learning and image generation.

The psychology underneath all of it

Here's what my experience reflects, and what the usage data keeps reinforcing: choosing an AI tool is more of a psychological decision than a rational one. And at least four things are driving it.

We see LLMs as characters, not tools. Within seconds of using a new interface, we attribute a personality to it, which makes sense as they are designed to be talked to. Claude reads as thoughtful and careful. ChatGPT as fast and proactive. Gemini as capable but corporate. None of these portraits are fully accurate, but they stick, and we choose the character we want to spend time with. Psychologists call this the Persona Selection Model: post-training shapes a distinct AI personality that either clicks or clashes with your own. If you're concise and dislike unnecessary warmth, a chatty model will irritate you regardless of how accurate it is.

Confirmation bias decides who's "good." When people test a new LLM, they almost always ask something they already know the answer to. If the model agrees with them, they trust it. If it pushes back, even correctly, they often read that as the model being wrong. This means most people never get an accurate read on which tool is actually better for them. They find the one that validates them most, and settle. Some models are specifically trained for agreeableness and we return to things that reward us, even when they're not serving us well.

We anthropomorphise to fill the gaps. When we don't fully understand how something works, we assign it human traits and use those as proxies for quality. An LLM that uses empathetic language gets trusted more, even though empathy and accuracy have nothing to do with each other in a machine. Tone becomes a stand-in for intelligence. We can't look inside the model, so we read its personality instead.

Our professional identity shapes our choices. The tool you use says something about how you see yourself at work. Developers who want to feel like power users gravitate to less filtered, more raw tools. People who care about responsible AI lean toward products whose brands publicly emphasise safety. We want our tools to reflect our values, or at least our preferred self-image. The choice of LLM has quietly become a form of identity statement.

This isn't about which AI is best

The benchmarks shift every few weeks, so technical superiority arguments have a short shelf life. ChatGPT holds around 60% of the generative AI chatbot market. Claude sits at 3.5% (Electro IQ). That gap has much more to do with habit, brand, and psychology than anything a benchmark would tell you.

Claude recently hit number one on Apple's App Store, overtaking ChatGPT (Fortune). It was pushed there by a political controversy around OpenAI's Pentagon contract, a brand moment that resonated with enough people to move the numbers.

People don't choose the best AI. They choose the one that feels most like them.

How I use each one, for what it's worth: Claude is my daily workspace for writing, thinking and building. Gemini for research and images. ChatGPT occasionally, mostly out of muscle memory. Which, fittingly, proves the point.